I intended to be more casual about these comics when I resumed reading them for their mathematics content. I feel like Comic Strip Master Command is teasing me, though. There has been an absolute drought of comics with enough mathematics for me to really dig into. You can see that from this essay, which covers nearly a month of the strips I read and has two pieces that amount to “the cartoonist knows what a prime number is”. I must go with what I have, though.

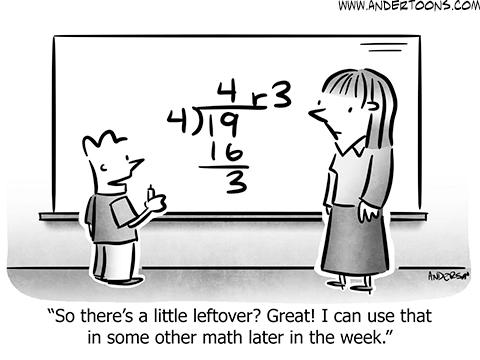

Mark Anderson’s Andertoons for the 12th of May I would have sworn was a repeat. If it is, I don’t seem to have featured it before. It gives us Wavehead — I’ve learned his name is not consistent — learning about division. The first kind of division, at least, with a quotient and a remainder. The novel thing here, with integer division, is that the result is not a single number, but rather an ordered pair. I hadn’t thought about it that way before, I suppose since integer division and ordered pairs are introduced so far apart in one’s education.
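Python happens to make the ordered-pair view explicit: its built-in `divmod` returns the quotient and remainder together as a tuple. A minimal sketch, with 19 and 4 picked only as sample numbers:

```python
# Integer division hands back two numbers at once: (quotient, remainder).
dividend, divisor = 19, 4
quotient, remainder = divmod(dividend, divisor)

print((quotient, remainder))  # (4, 3)

# The pair carries exactly enough information to rebuild the dividend.
assert divisor * quotient + remainder == dividend
```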

We mostly put away this division-with-remainders as soon as we get comfortable with decimals. Writing 19 ÷ 4 as “4 remainder 3” or “4.75” or “4 $latex \frac{3}{4} $” imposes a roughly equal cognitive load each way. But this division reappears in (high school) algebra, when we start dividing polynomials. (Almost anything you can do with integers there’s a similar thing you can do with polynomials. This is not just because you can rewrite the integer “4” as the polynomial “f(x) = 0x + 4”.) There may be something easier to understand in turning one polynomial divided by another into a quotient polynomial with a remainder.
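To make the parallel with integers concrete, here is a rough sketch of polynomial long division that returns a (quotient, remainder) pair, much as `divmod` does for integers. The function name `poly_divmod`, the coefficient-list representation, and the sample polynomials are all my own choices for illustration, not anything from the strip.

```python
from fractions import Fraction


def poly_divmod(dividend, divisor):
    """Divide polynomials given as coefficient lists, highest power first.

    Returns (quotient, remainder), mirroring what divmod() does for integers.
    """
    if not any(divisor):
        raise ZeroDivisionError("division by the zero polynomial")
    # Strip leading zeros so the divisor's first coefficient is nonzero.
    while divisor[0] == 0:
        divisor = divisor[1:]
    remainder = [Fraction(c) for c in dividend]
    divisor = [Fraction(c) for c in divisor]
    quotient = []
    # Each pass cancels the current leading term of what is left over.
    while len(remainder) >= len(divisor):
        factor = remainder[0] / divisor[0]
        quotient.append(factor)
        for i, c in enumerate(divisor):
            remainder[i] -= factor * c
        remainder.pop(0)  # the leading term is now zero, so drop it
    return quotient or [Fraction(0)], remainder or [Fraction(0)]


# (x^2 + 3x - 3) divided by (x - 2): quotient x + 5, remainder 7.
q, r = poly_divmod([1, 3, -3], [1, -2])
assert q == [1, 5] and r == [7]
```

Checking by hand, (x - 2)(x + 5) + 7 multiplies out to x^2 + 3x - 3, the same way 4 × 4 + 3 gets you back to 19.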

A thing happening here is that integer arithmetic is a ring. We study a lot of rings, as it’s not hard to come up with things that look like addition and subtraction and multiplication. With rings we don’t assume there is a division that stays in your set. Division can instead turn into pairs, as with integers or with polynomials. Having that division, by everything except zero, makes a commutative ring into a field, so-called because we don’t have enough things called a “field” already.

Scott Hilburn’s The Argyle Sweater for the 16th of May is one of the prime number strips from this collection. About the only note worth mentioning is that the indivisibility of 3 depends on supposing we mean the integer 3. If we decided 3 was a real number, we would have every real number other than zero as a divisor. There are similar results for complex numbers or polynomials. I imagine there’s a good fight one could get going about whether 3-in-integer-arithmetic is the same number as 3-in-real-arithmetic. I’m not ready for that right now, though.
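A small sketch of the contrast, under one loud assumption: I use Python's exact `Fraction` type as a stand-in for the real numbers, since floating point would blur the point with rounding. The helper `integer_divisors` is a made-up name for this example.

```python
from fractions import Fraction


def integer_divisors(n):
    """Positive integers that divide n with no remainder."""
    return [d for d in range(1, abs(n) + 1) if n % d == 0]


print(integer_divisors(3))  # [1, 3]

# Among the rationals (standing in for the reals), any nonzero d divides 3:
# the quotient 3/d is again a rational, and d times it gives back exactly 3.
for d in (Fraction(2), Fraction(7, 5), Fraction(-1, 9)):
    q = Fraction(3) / d
    assert d * q == 3
```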

I like the blood bag Dracula’s drinking from. Nice touch.

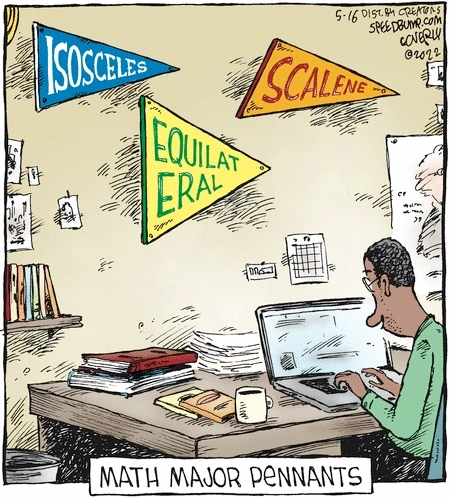

Dave Coverly’s Speed Bump for the 16th of May names the ways to classify triangles based on common side lengths (or common angles). There is some non-absurdity in the joke’s premise. Not the existence of these particular pennants. But that someone who loves a subject enough to major in it will often be a bit fannish about it? Yes. It’s difficult to imagine going any other way. You need to get to a pretty high level of mathematics to go seriously into triangles, but the option is there.
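The side-length classification fits in a few lines of code. This is a sketch assuming the usual names (equilateral, isosceles, scalene) and the exclusive convention in which an equilateral triangle is not also reported as isosceles. The function name `classify_by_sides` is hypothetical.

```python
def classify_by_sides(a, b, c):
    """Name a triangle by how many of its side lengths match."""
    sides = sorted((a, b, c))
    # Reject nonpositive lengths and anything failing the triangle inequality.
    if sides[0] <= 0 or sides[0] + sides[1] <= sides[2]:
        raise ValueError("these lengths do not form a triangle")
    distinct = len(set(sides))
    return {1: "equilateral", 2: "isosceles", 3: "scalene"}[distinct]


print(classify_by_sides(2, 2, 2))  # equilateral
print(classify_by_sides(3, 3, 5))  # isosceles
print(classify_by_sides(3, 4, 5))  # scalene
```

Classifying by common angles gives the same three groups, since equal sides sit opposite equal angles.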

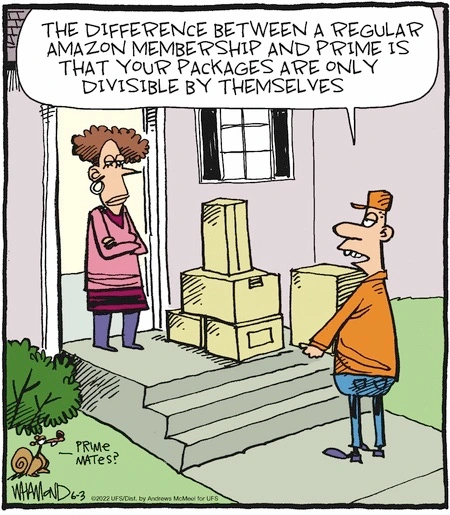

Dave Whamond’s Reality Check for the 3rd of June is the other comic strip playing on the definition of “prime”. Here it’s applied to the hassle of package delivery, and the often comical way that items will get boxed in what seems to be no logical pattern. But there is a reason behind that lack of pattern. It is an extremely hard problem to get bunches of things together at once. It gets even harder when those things have to come from many different sources, and get warehoused in many disparate locations. Add to that the shipper’s understandable desire to keep stuff sitting around, waiting, for as little time as possible. So the waste in packaging and handling and delivery costs can seem worth it: better to send an order in ten boxes than to work out how to send it all in one.

It feels like an obvious offense to reason to use four boxes to send five items. It can be hard to tell whether the cost of organizing things into fewer boxes outweighs the additional cost of transporting, mostly, air. This is not to say that I think the choice is necessarily made correctly. I don’t trust organizations to not decide “I dunno, we always did it this way”. I want instead to note that when you think hard about a question it often becomes harder to say what a good answer would be.

I can give you a good answer, though, if your question is how to read more comic strips alongside me. I try to put all my Reading the Comics posts at this link. You can see something like a decade’s worth of my finding things to write about students not answering word problems. Thank you for reading along with this.
